Difference between revisions of "Linux shell/Commands"

Line 626:

wget -qO- https://raw.githubusercontent.com/pio2pio/project/master/setup_sh | sudo -E bash -

</source>

== Run local scripts remotely ==

Use the <code>-s</code> option, which forces bash (or any POSIX-compatible shell) to read its commands from standard input rather than from a file named by the first positional argument. All arguments are then treated as parameters to the script. If you wish to pass option-style parameters, eg. <code>--silent true</code>, put <code>--</code> before them so they are interpreted as arguments to test.sh instead of options to bash.

<source lang="bash">
ssh user@remote-addr 'bash -s arg' < test.sh
ssh user@remote-addr bash -sx -- -p am -c /tmp/conf < ./support/scripts/sanitizer.sh
# -- signals the end of options and disables further option processing.
# any arguments after the -- are treated as filenames and arguments
# -p am -c /tmp/conf -these are arguments passed onto the sanitizer.sh script
</source>

References

*[https://unix.stackexchange.com/questions/87405/how-can-i-execute-local-script-on-remote-machine-and-include-arguments how-can-i-execute-local-script-on-remote-machine-and-include-arguments]

== Scripts - solutions ==

Revision as of 08:25, 15 August 2018

One liners

df -h #disk free shows available disk space for all mounted partitions
du -sh * | sort -h #disk usage summary for each directory, sorted by human readable size
free -m #displays the amount of free and used memory in the system
lsb_release -a #prints version information for the Linux release you're running
tload #draws system load on a text based graph

Copy with progress bar

- rsync and cp

- rsync -aP - copy with progress; can also be aliased: alias cp='rsync -aP'

- cp -rv old-directory new-directory - prints each file name as it is copied (verbose output, not a progress bar)

- PV does not preserve permissions and does not handle attributes

- pv ~/kali.iso | cat - > /media/usb/kali.iso equals cp ~/kali.iso /media/usb/kali.iso

- pv ~/kali.iso > /media/usb/kali.iso equals cp ~/kali.iso /media/usb/kali.iso

- pv access.log | gzip > access.log.gz shows gzip compressing progress.

PV can be thought of as the cat command piping '|' its output to another command while showing a progress bar and ETA. -c stops multiple pv instances from overwriting each other's output lines, -N names a stream. Find more at How to use PV pipe viewer to add progress bar to cp, tar, etc..

$ pv -cN source access.log | gzip | pv -cN gzip > access.log.gz
source: 760MB 0:00:15 [37.4MB/s] [=> ] 19% ETA 0:01:02
gzip: 34.5MB 0:00:15 [1.74MB/s] [ <=> ]

Copy files between remote systems quick

List SSH MACs, Ciphers, and KexAlgorithms

ssh -Q cipher; ssh -Q mac; ssh -Q kex

Rsync

rsync -OPTION SOURCE DEST
rsync -axvPW user@remote-srv:/remote/path/from /local/path/to
rsync -axvPWz user@remote-srv:/remote/path/from /local/path/to #compresses before transfer

Rsync over ssh

rsync -avPW -e ssh $SOURCE $USER@$REMOTE:$DEST
rsync -avPW -e ssh /local/path/from remote@server.com:/remote/path/to

-e, --rsh=COMMAND -specify the remote shell to use
-P -same as --partial --progress: keep partially transferred files and show progress
-a, --archive -archive mode; equals -rlptgoD (no -H,-A,-X)
-v, --verbose
-W, --whole-file -copy files whole (w/o delta-xfer algorithm)
-x, --one-file-system -don't cross filesystem boundaries
-z -compress files before transfer, consumes ~70% of CPU

Tar over ssh

Copy from a local server (data source) to a remote server. tar archives the folder, but because the archive name is "-" the tar stream goes to STDOUT and through the pipe "|" to ssh, which extracts the tarball on the remote server.

tar -cf - /path/to/dir | ssh user@remote-srv-copy-to 'tar -xvf - -C /path/to/remotedir'

Copying from a local server (the data source) to a remote server as a single compressed .tar.gz file

tar czf - -C /path/to/source files-and-folders | ssh user@remote-srv-copy-to "cat - > /path/to/archive/backup.tar.gz"

Copying from a remote server to a local server (where you execute the command). This executes tar on the remote server, writes the archive to STDOUT ("-") and extracts it locally.

ssh user@remote-srv-copy-from "tar czpf - /path/to/data" | tar xzpf - -C /path/to/extract/data

-c -create a new archive
-f, --file -archive name; '-' (dash) means STDOUT when creating and STDIN when extracting
-C, --directory=DIR -change to directory DIR, cd to the specified directory at the destination
-x -extract
-v -display files on a screen


Listing a directory in the form of a tree

$ tree ~
$ ls -R | grep ":$" | sed -e 's/:$//' -e 's/[^-][^\/]*\//--/g' -e 's/^/ /' -e 's/-/|/'
$ alias lst='ls -R | grep ":$" | sed -e '"'"'s/:$//'"'"' -e '"'"'s/[^-][^\/]*\//--/g'"'"' -e '"'"'s/^/ /'"'"' -e '"'"'s/-/|/'"'"
$ ls -R | grep ":$" | sed -e 's/:$//' -e 's/[^-][^\/]*\// /g' -e 's/^/ /' #using spaces, doesn't list .git

A directory statistics: size, files count and files types based on an extension

find . -type f | sed 's/.*\.//' | sort | uniq -c | sort -n | tail -20; echo "Total files: " | tr --delete '\n'; find . -type f | wc -l; echo "Total size: " | tr --delete '\n' ; du -sh

Constantly print tcp connections count in line

while true; do echo -n `ss -at | wc -l`" " ; sleep 3; done

Stop/start multiple services at the same time

cd /etc/init.d
for i in servicename-*; do service "$i" status; done
for i in servicename-*; do service "$i" restart; done

Unlock a user on the FTP server

pam_tally2 --user <uid> #this will show you the number of failed logins
pam_tally2 --user <uid> --reset #this will reset the count and let the user in

Tail log files

tail-f-the-output-of-dmesg or install multitail

tail -f /var/log/{messages,kernel,dmesg,syslog} #old school but not perfect

less +F /var/log/syslog #equivalent tail -f but allows for scrolling

watch 'dmesg | tail -50' # approved by man dmesg

watch 'sudo dmesg -c >> /tmp/dmesg.log; tail -n 40 /tmp/dmesg.log' #tested, but experimental

- less

- multifile monitor: to see what's happening in the second file, first press Ctrl-c to go to normal mode, then type :n to go to the next buffer, and then F again to go back to the watching mode.

Big log files

Clear a file content

Clearing logs from this location will release space on the / partition

cd /chroot/httpd/usr/local/apache2/logs
> mod_jk.log #zeroize the file

Clear a part of a file

You can prefix a command with time to measure how long it takes to execute

$ wc -l catalina.out #count lines
3156616 catalina.out
$ time split -d -l 1000000 catalina.out.tmp catalina.out.tmp- #splits the tmp file every 1000000 lines, naming the pieces catalina.out.tmp-##; -d makes ## a numeric sequence
$ time tail -n 300000 catalina.out > catalina.out.tmp #creates a copy of the file with the last 300k lines
$ time cat catalina.out.tmp > catalina.out #truncates the currently open file and writes the tmp file content into it

Locked file by a process does not release free space back to a file system

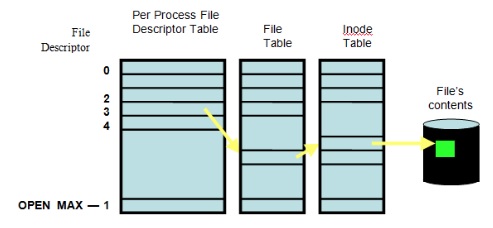

When you delete a file you are in fact removing a directory entry (a link) that points to the file's inode. If a process still holds a file handle to it, the file no longer shows up in the file system, but its disk blocks are not freed until the handle is closed. One way to release the space is to send the HUP signal to the process holding the file handle, which makes many daemons close and reopen their files. This is how Unix works: while a process is still using a file, the system will not get rid of it.

kill -HUP <PID>

Diagram is showing File Descriptor (fd) table, File table and Inode table, finally pointing to a block device where data is stored.
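The behaviour described above can be reproduced safely with a scratch file. This is a minimal sketch, assuming bash and mktemp are available; the file and the variable names are made up for the demonstration.

```bash
#!/bin/bash
# Sketch: a deleted file stays readable while a descriptor is still open.
tmpfile=$(mktemp)                 # hypothetical scratch file
echo "still here" > "$tmpfile"
exec 3< "$tmpfile"                # open FD 3 for reading
rm -f "$tmpfile"                  # removes the name; the inode stays while FD 3 is open
if [ -e "$tmpfile" ]; then name_exists=yes; else name_exists=no; fi
read -r content <&3               # the data is still reachable through FD 3
exec 3<&-                         # closing the last descriptor lets the kernel free the blocks
echo "name exists: $name_exists, content: $content"
```

While FD 3 is open, lsof would list the file as (deleted); the space is only returned once the descriptor is closed.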

- Search for deleted files that are still held open.

$ lsof | grep deleted
COMMAND PID TID USER FD TYPE DEVICE SIZE/OFF NODE NAME
mysqld 2230 mysql 4u REG 253,2 793825 /var/tmp/ibXwbu5H (deleted)
mysqld 2230 mysql 5u REG 253,2 793826 /var/tmp/ibfsqdZz (deleted)

$ lsof | grep DEL
COMMAND PID TID USER FD TYPE DEVICE SIZE/OFF NODE NAME
httpd 54290 apache DEL REG 0,4 32769 /SYSV011246e6
httpd 54290 apache DEL REG 0,4 262152 /SYSV0112b646

Timeout commands after certain time

Ping multiple hosts and terminate each ping after 2 seconds; helpful when a server is behind a firewall and no ICMP responses are returned

$ for srv in `cat nodes.txt`;do timeout 2 ping -c 1 $srv; done

Time manipulation

Replace unix timestamps in logs to human readable date format

user@laptop:/var/log$ tail dmesg | perl -pe 's/(\d+)/localtime($1)/e'
[ Thu Jan 1 01:00:29 1970.168088] b43-phy0: Radio hardware status changed to DISABLED
[ Thu Jan 1 01:00:29 1970.308597] tg3 0000:09:00.0: irq 44 for MSI/MSI-X
[ Thu Jan 1 01:00:29 1970.344378] IPv6: ADDRCONF(NETDEV_UP): eth0: link is not ready
[ Thu Jan 1 01:00:29 1970.344745] IPv6: ADDRCONF(NETDEV_UP): eth0: link is not ready
user@laptop:/var/log$ tail dmesg
[ 29.168088] b43-phy0: Radio hardware status changed to DISABLED
[ 29.308597] tg3 0000:09:00.0: irq 44 for MSI/MSI-X
[ 29.344378] IPv6: ADDRCONF(NETDEV_UP): eth0: link is not ready
[ 29.344745] IPv6: ADDRCONF(NETDEV_UP): eth0: link is not ready

Convert seconds to human readable

sec=4717.728; eval "echo $(date -ud "@$sec" +'$((%s/3600/24))d:%-Hh:%Mm:%Ss')" # '-' hyphen in %-H means do not pad
0d:1h:18m:37s
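The same conversion can also be done with shell arithmetic only, without eval or date. A minimal sketch, assuming bash; fmt_duration is a hypothetical helper name and the fractional part of the input is simply dropped.

```bash
# Sketch: convert seconds to d:h:m:s using integer arithmetic only.
fmt_duration() {
  local secs=${1%.*}   # drop any fractional part, e.g. 4717.728 -> 4717
  printf '%dd:%dh:%02dm:%02ds\n' \
    $((secs / 86400)) $((secs % 86400 / 3600)) $((secs % 3600 / 60)) $((secs % 60))
}

fmt_duration 4717.728   # prints 0d:1h:18m:37s
```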


Sed - Stream Editor

Replace, substitute

sed -i 's/pattern/substituteWith/g' ./file.txt #substitutes 'pattern' with 'substituteWith' in each matching line
sed 's/A//g' #substitutes 'A' with an empty string; /g means globally, so it removes every 'A' from the whole line
# s/ -substitute
# /w -substitute and write matching lines into a file
sed 's/pattern/substituteWith/w matchingPattern.txt' ./file.txt

Substitute every file grepp'ed (-l returns a list of matched files)

for i in `grep -iRl 'dummy is undefined or' *`;do sed -i 's/dummy is undefined or/dummy is defined and/' $i; sleep 1; done

Replace a string between XML tags. The address range /<Tag>/,/<\/Tag>/ selects the lines from <Tag> to </Tag>; within those lines s substitutes findstring with replacingstring, globally (/g).

sed -i '/<Tag>/,/<\/Tag>/s/findstring/replacingstring/g' file.xml
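To see that only the lines inside the tag range are touched, the same expression can be run on a small in-memory sample instead of a file. A minimal sketch; the sample XML is made up and -i is left out so nothing is modified on disk.

```bash
# Sketch: the range address limits the substitution to lines between <Tag> and </Tag>.
xml='<Other>findstring</Other>
<Tag>
findstring
</Tag>'
result=$(printf '%s\n' "$xml" | sed '/<Tag>/,/<\/Tag>/s/findstring/replacingstring/g')
printf '%s\n' "$result"
```

The first line keeps its findstring because it lies outside the range; only the line between the tags is rewritten.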

Replace 1st occurrence

sed '0,/searchPattern/s/searchPattern/substituteWith/g' ./file.txt #0,/searchPattern/ limits the substitution to the lines up to and including the first match (GNU sed extension)

Remove all lines until first match

sed -r -i -n -e '/Dynamic/,$p' resolv.conf
#-r extended regex, -i edit in place, -n do not print lines by default (quiet, silent)
#-e expression; /Dynamic/,$p prints from the first matching line to the end of the file

Regex search and remove (replace with empty string) all xml tags.

sed 's/<[^>]*>//g' ./file.txt # [] -regex character class, [^>] -negated class: any character except >, so each match stops at the first >; /g removes every tag on a line

References

Sed manual Official GNU

Awk - language for processing text files

Remove duplicate keys from known_host file

awk '!seen[$0]++' ~/.ssh/known_hosts > /tmp/known_hosts; mv -f /tmp/known_hosts ~/.ssh/

Useful packages

- ARandR Screen Layout Editor - 0.1.7.1

Add user to a group

In Ubuntu, adding a user to the admin group will grant root privileges. Adding them to the sudo group will allow them to execute any command

sudo usermod -a -G nameofgroup nameofuser #requires to login again

In RedHat/CentOS add a user to the 'wheel' group to grant them sudo access

sudo usermod -a -G wheel nameofuser #requires to login again

Change user primary group and other groups

To assign a primary group to a user:

$ sudo usermod -g primarygroupname username

To assign secondary groups to a user (-a keeps already existing secondary groups intact otherwise they'll be removed):

$ sudo usermod -a -G secondarygroupname username

From man-page:

...
-g (primary group assigned to the users)
-G (Other groups the user belongs to)
-a (Add the user to the supplementary group(s))
...

Show USB devices

lsusb -t #shows USB tree

Copy and Paste in terminal

In the Linux X graphical interface this works differently than in Windows; you can read more in X Selections, Cut Buffers, and Kill Rings. When you select some text it becomes the Primary selection (not the Clipboard selection), which can then be pasted using the middle mouse button. Note however that if you close the application offering the selection, in your case the terminal, the selection is essentially "lost".

Option 1 works in X

- select text to copy then use your mouse middle button or press a wheel

Option 2 works in Gnome Terminal

- Ctrl+Shift+C - copy

- Ctrl+Shift+V or Shift+Insert - paste

Option 3 Install Parcellite GTK+ clipboard manager

sudo apt-get install parcellite

then in the settings check "use primary" and "synchronize clipboards"

Generate random password

cat /dev/urandom|tr -dc "a-zA-Z0-9"|fold -w 48|head -n1
openssl rand -base64 24

Localization - Change a keyboard layout

setxkbmap gb

at, atd - schedule a job

At can execute command at given time. It's important to remember 'at' can only take one line and by default it uses limited /bin/sh shell.

service atd status #check if the at daemon is running
at 01:05 AM #schedule a job at the given time
atq #list the job queue
at -c <job_number> #cat the job_number
atrm <job_number> #deletes the job
mail #at emails the job output to the user who scheduled it

Linux Shell

- wiki.bash-hackers.org

- Reference Cards tldp.org/LDP

- How to handle white spaces Explains white spacing, eval and how to deal with building complex commands in scripts

Syntax checker

A common tool is ShellCheck. Below is a reference for installing the stable version on Ubuntu

sudo apt install xz-utils #pre req

export scversion="stable" # or "v0.4.7", or "latest"

wget "https://storage.googleapis.com/shellcheck/shellcheck-${scversion}.linux.x86_64.tar.xz"

tar --xz -xvf shellcheck-"${scversion}".linux.x86_64.tar.xz

cp shellcheck-"${scversion}"/shellcheck /usr/bin/

shellcheck --version

which and whereis

which pwd #returns binary path only
whereis pwd #returns binary path and paths to man pages

dirname, basename, $0

#!/bin/bash
PRG=$0 #relative path with program name
BASENAME=$(basename "$0") #strip directory and suffix from filenames, here it's own script name
DIRNAME=$(dirname "$0") #strip last component from file name, returns relative path to the script
printf "PRG=\$0 --> computed full path: $PRG\n"
printf "BASENAME=\$(basename \$0) --> computed name of script: $BASENAME\n"
printf "DIRNAME=\$(dirname \$0) --> computed dir of script: $DIRNAME\n"

standard date timestamp for a filename

echo $(date +"%Y%m%dT%H%M") #20180618T1136
echo $(date +"%Y%m%d-%H%M") #20180618-1136

Variables scope

- Environment variable - defined in ENV of a shell

- Script Global Scope variable - defined within a script

- Function local variable - defined within a function and will become available/defined in Global Scope only after the function has been called
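The scopes listed above can be illustrated with a short script. This is a minimal sketch, assuming bash; the variable and function names are invented for the example, and the local keyword is shown as the way to keep a variable inside a function.

```bash
# Sketch: global variables vs. variables created or shadowed inside functions.
counter=1                      # script-global variable

bump_global() {
  counter=$((counter + 1))     # no 'local': modifies the global
  created_in_func="visible"    # becomes global once the function has been called
}

use_local() {
  local counter=99             # 'local' shadows the global inside this function only
  echo "inside: $counter"
}

before=${created_in_func:-unset}   # the variable does not exist before the call
bump_global
use_local
echo "counter=$counter created_in_func=$created_in_func (before the call: $before)"
```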

file descriptors

File descriptors are numbers, also referred to as numbered streams; the first three FDs are reserved by the system:

0 - STDIN, 1 - STDOUT, 2 - STDERR

echo "Enter a file name to read: "; read FILE
exec 5<>"$FILE" #open the file on FD 5 for both reading '<' and writing '>'
while read -r SUPERHERO; do #-r do not treat backslashes as escape characters
  echo "Superhero Name: $SUPERHERO"
done <&5 #'&' describes that '5' is a FD, reads the file and redirects into while loop
echo "File Was Read On: `date`" >&5 #write date to the file
exec 5>&- #close '-' FD and close out all connections to it

Remove empty lines

cat blanks.txt | awk NF # [1]
cat blanks.txt | grep -v '^[[:blank:]]*$' # [2]
grep -e "[[:blank:]]" blanks

- [1] The command awk NF is shorthand for awk 'NF != 0', or 'print those lines where the number of fields is not zero'.

- [2] The POSIX character class [:blank:] includes the space and tab characters. The asterisk (*) after the class means 'any number of occurrences of that expression'. The initial caret (^) means that the character class begins the line. The dollar sign ($) means that any number of occurrences of the character class also finish the line, with no additional characters in between.

References

Here-doc - cat redirection to create a file

Example of how to use heredoc and redirect STDOUT to create a file

cat << EOF > hosts.txt
wp.pl
bbc.co.uk
google.com
EOF

String manipulation


#strips the longest match (%%) from the end of the string; [0-9]* is a glob pattern: a digit followed by anything

vpc=dev02; echo ${vpc%%[0-9]*} # -> dev

#Strip the end of the string matching '.aws.company.*'; the pattern is a shell glob, not a regex

host="box-1.prod1.midw.prod20.aws.company.cloud"; echo ${host%.aws.company.*} # -> box-1.prod1.midw.prod20

#Strip the longest match (##) from the beginning of the string: '*' anything followed by a <space>

host="box-1.company.cloud 22"; echo ${host##* } # -> 22

#Strip the longest match (%%) from the end of the string: a <space> followed by '*' anything (glob)

host="box-1.company.cloud 22"; echo ${host%% *} # -> box-1.company.cloud
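The four operators (#, ##, %, %%) can be combined to split a record into its parts. A minimal sketch; the record and the variable names are made up, and the patterns are shell globs as in the examples above.

```bash
# Sketch: splitting a "host port" record with prefix/suffix stripping.
entry="box-1.company.cloud 22"   # hypothetical record
host=${entry%% *}                # longest suffix matching ' *' removed  -> host part
port=${entry##* }                # longest prefix matching '* ' removed  -> port part
short=${host%%.*}                # everything from the first dot removed
domain=${host#*.}                # shortest prefix up to the first dot removed
echo "$host $port $short $domain"
```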

Default value and parameter substitution

PASSWORD="${1:-data123}" #expand to $1 if it is set and not empty, otherwise expand to the default value "data123"

: "${PASSWORD:=data123}" #if PASSWORD is not set or is empty, assign "data123" to it; := cannot assign to positional parameters such as $1

${var:=$DEFAULT}
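The difference between :- and := is that only the latter changes the variable. A minimal sketch; REGION and its default value are hypothetical names chosen for the example.

```bash
# Sketch: ':-' only substitutes a default, ':=' also assigns it.
unset REGION                       # REGION is a hypothetical variable name
first=${REGION:-eu-west-1}         # expands to the default, REGION itself stays unset
after_dash=${REGION:-unset}        # still unset after the ':-' expansion
: "${REGION:=eu-west-1}"           # ':' is a no-op command; the expansion assigns the default
echo "$first | $after_dash | $REGION"
```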

tr - translate

Translates one set of characters read from STDIN into another set written to STDOUT; character classes can be used, eg:

echo "Who is the standard text editor?" | tr '[:lower:]' '[:upper:]'
echo 'ed, of course!' | tr -d aeiou #-d deletes characters from a string
echo 'The ed utility is the standard text editor.' | tr -s astu ' ' #translates the characters a, s, t, u into spaces and squeezes repeats
echo 'extra tabs - 2' | tr -s '[:blank:]' #squeezes repeated white spaces
tr -d '\r' < dosfile.txt > unixfile.txt #remove carriage returns <CR>

Continue

https://www.ibm.com/developerworks/aix/library/au-unixtext/index.html Next colrm

paste

Paste can join 2 files side by side to provide a horizontal concatenation.
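A minimal sketch of the side-by-side join, using two scratch files created in a temporary directory; the file names and their contents are made up for the example.

```bash
# Sketch: paste joins the lines of two files side by side.
workdir=$(mktemp -d)                                      # scratch directory for the demo
printf '%s\n' web01 web02 > "$workdir/hosts"              # hypothetical sample data
printf '%s\n' 10.0.0.1 10.0.0.2 > "$workdir/ips"
joined=$(paste "$workdir/hosts" "$workdir/ips")           # columns separated by a tab by default
joined_csv=$(paste -d, "$workdir/hosts" "$workdir/ips")   # -d sets the delimiter
printf '%s\n' "$joined" "$joined_csv"
rm -rf "$workdir"
```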

Function with a parameter

Parameters are passed to functions in the same manner as to scripts using $1, $2, $3 positional parameters and using them inside the function.

funcLastLoginInDays () {
  echo "$USERNAME - logged in $1 days ago"
}

#eg. of calling a function with a parameter; 7 is an example value that becomes $1 inside the function
funcLastLoginInDays 7

References

- parameter-substitution tldp.org/LDP

- unix.stackexchange.com

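getopts - parameters

while getopts ":k:h" opt; do allows you to pass parameters into a script

: - a leading colon enables silent error reporting: getopts sets opt to '?' for an unknown option and to ':' for a missing argument instead of printing its own message
k: - parameter k requires an argument value to be passed on because ':' follows 'k'
h - parameter h does not require a value to be passed on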
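A complete loop around the ":k:h" option string might look like the sketch below. It assumes bash; parse_args, key and show_help are hypothetical names, and the parsing is wrapped in a function only so it can be called more than once.

```bash
# Sketch: parsing -k <value> and -h with getopts in silent-error mode.
parse_args() {
  key=""; show_help=no
  OPTIND=1                                   # reset so the function can be called repeatedly
  while getopts ":k:h" opt; do
    case $opt in
      k) key=$OPTARG ;;                      # -k requires a value, delivered in OPTARG
      h) show_help=yes ;;                    # -h is a plain flag
      :) echo "Option -$OPTARG requires a value" >&2; return 1 ;;
      \?) echo "Unknown option -$OPTARG" >&2; return 1 ;;
    esac
  done
}

parse_args -k mykey -h && echo "key=$key help=$show_help"
```

In a script the same loop would normally run at top level against "$@", followed by shift $((OPTIND - 1)) to drop the parsed options.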

set options

set -o noclobber # prevents > from overwriting a file that already exists
set +o noclobber # turns clobbering back on

short version of checking command line parameters

Checking 3 positional parameters. Run with: ./check one

#!/bin/bash

: ${3?"Usage: $1 ARGUMENT $2 ARGUMENT $3 ARGUMENT"}

IFS and delimiting

$IFS (Internal Field Separator) is a shell variable defining the default delimiters used for word splitting. It can be changed at any time in the global scope or within a subshell by assigning a new value, eg:

printf '%q\n' "$IFS" # displays the current delimiters, by default space, tab and newline
IFS=',' #changes the delimiter to ',' (comma)
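Often the delimiter only needs to change for a single command. A minimal sketch, assuming bash; the record and the variable names are invented, and the IFS assignment is placed in front of read so it applies to that one command only.

```bash
# Sketch: split a comma-separated record without changing IFS globally.
line="esb,dss,am"                  # hypothetical comma-separated record
IFS=',' read -r first second third <<EOF
$line
EOF
echo "$first / $second / $third"   # IFS was changed for the read command only
```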

Counters

These counters do not need to be initialised for the arithmetic itself; an unset variable counts as 0 inside (( )), so the first value printed is 1.

#!/bin/bash
COUNTER=0 #defined only to satisfy the [ ] test in while; otherwise error: [: -lt: unary operator expected
while [ $COUNTER -lt 5 ]; do
  (( COUNTER++ )) # COUNTER=$((COUNTER+1)) # let COUNTER++
  echo $COUNTER
done

Loops

Bash shell - for i loop

for I in {1..10}; do echo $I; done

for I in 1 2 3 4 5 6 7 8 9 10; do echo $I; done

for I in $(seq 1 10); do echo $I; done

for ((I=1; I <= 10 ; I++)); do echo $I; done

for host in $(cat hosts.txt); do ssh "$host" "$command" >"output.$host"; done
a01.prod.com
a02.prod.com
for host in $(cat hosts.txt); do ssh "$host" 'hostname; grep Certificate /etc/httpd/conf.d/*; echo ====='; done

Dash shell - for i loop

#!/bin/dash
wso2List="esb dss am mb das greg" #by default white space separates items in the list structures
for i in $wso2List; do
  echo "Product: $i"
done

Bash shell - while loop

while true; do tail /etc/passwd; sleep 2; clear; done

Read a file

FILE="hostlist.txt" #a list of hostnames, 1 host per line
# optional: IFS= -r
while IFS= read -r LINE; do
  (( COUNTER++ ))
  echo "Line: $COUNTER - Host_name: $LINE"
done < "$FILE" #redirect the file content into loop

Read a variable

PLACES='Warsaw
London'
while read LINE; do
  (( COUNTER++ ))
  echo "Line: $COUNTER - Line_content: $LINE"
done <<< "$PLACES" #inject multi-line string variable, ref. here-string

- The -r option passed to the read command prevents backslash escapes from being interpreted

- IFS= before the read command prevents leading/trailing whitespace from being trimmed

Loop while the counter expression is true

while [ $COUNT -le $DISPLAYNUMBER ]; do

echo "Hello World - $COUNT"

COUNT="$(expr $COUNT + 1)"

done

Arrays

hostlist=("am-mgr-1" "am-wkr-1" "am-wkr-2" "esb-mgr-1") #indexed array

for INDEX in "${!hostlist[@]}"; do #${!hostlist[@]} expands to every index of the array; ${hostlist[@]} expands to every element

printf '%s\n' "${hostlist[INDEX]}" #looks up the element by its index

done

Associative arrays, key=value pairs

#!/bin/bash

ec2type () { #function declaration

declare -A array=( #associative array declaration, bash 4

["c1.medium"]="1.7 GiB,2 vCPUs" #double quotation is required

["c1.xlarge"]="7.0 GiB,8 vCPUs"

["c3.2xlarge"]="15.0 GiB,8 vCPUs"

)

echo -e "${array[$1]:-NA,NA}" #lookups for a value $1 (eg. c1.medium) in the array,

} #if nothing found (:-) expands to default "NA,NA" string

ec2type $1 #invoke the function passing 1st argument received from a command line

### Usage

$ ./ec2types.sh c1.medium

1.7 GiB,2 vCPUs

if statement

if [ "$VALUE" -eq 1 ] || [ "$VALUE" -eq 5 ] && [ "$VALUE" -gt 4 ]; then
  echo "Hello True"
elif [ "$VALUE" -eq 7 ] 2>/dev/null; then
  echo "Hello 7"
else
  echo "False"
fi

case statement

case $MENUCHOICE in

1)

echo "Good choice!"

;; #ends this case clause so execution does not fall through to the next pattern

2)

echo "Better choice"

;;

*)

echo "Help: wrong choice";;

esac

traps

First argument is a command to execute, followed by events to be trapped

trap 'echo "Press Q to exit"' SIGINT SIGTERM
trap 'funcMyExit' EXIT #run a function on script exit
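A common use of the EXIT trap is cleaning up temporary files. The sketch below assumes bash, where a trap set inside a subshell runs when that subshell exits; scratch and leftover are hypothetical names.

```bash
# Sketch: an EXIT trap removes a temporary file when the subshell finishes.
leftover=$(
  scratch=$(mktemp)               # hypothetical temporary file
  trap 'rm -f "$scratch"' EXIT    # runs when this subshell exits, however it exits
  echo "working data" > "$scratch"
  echo "$scratch"                 # report the path to the caller
)
if [ -e "$leftover" ]; then echo "not cleaned"; else echo "cleaned up"; fi
```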

debug mode

You can enable and disable debugging mode anywhere in your script, as many times as needed

set -x #starts debug
echo "Command to debug"
set +x #stops debug

Or debug in sub-shell by running with -x option eg.

$ bash -x ./script.sh

Additionally you can disable globbing with -f

Create a big file

Use the dd tool to create a file of size count*bs bytes

- 1024 bytes * 1024 count = 1048576 bytes = 1 MiB

- 1024 bytes * 10240 count = 10 MiB

- 1024 bytes * 102400 count = 100 MiB

- 1024 bytes * 1024000 count = 1000 MiB (just under 1 GiB; count=1048576 gives exactly 1 GiB)

dd if=/dev/zero bs=1024 count=10240 of=/tmp/10mb.zero #creates a 10MB file of zeros
dd if=/dev/urandom bs=1048576 count=100 of=/tmp/100mb.bin #creates 100MB random file
dd if=/dev/urandom bs=1048576 count=1000 of=1G.bin status=progress

dd in GNU Coreutils 8.24+ (Ubuntu 16.04 and newer) has a status option to display the progress:

dd if=/dev/zero bs=1048576 count=1000 of=1G.bin status=progress
1004535808 bytes (1.0 GB, 958 MiB) copied, 6.01483 s, 167 MB/s
1000+0 records in
1000+0 records out
1048576000 bytes (1.0 GB, 1000 MiB) copied, 15.4451 s, 67.9 MB/s

Colours

red="\e[31m"

red_bold="\e[1;31m" #1; makes this bold

blue_bold="\e[1;34m"

green_bold="\e[1;32m"

light_yellow="\e[93m"

reset="\e[0m"

echo -e "${green_bold}Hello world!${reset}"

Colours function using a terminal settings rather than ANSI color control sequences

if test -t 1; then # if terminal

ncolors=$(which tput > /dev/null && tput colors) # supports color

if test -n "$ncolors" && test $ncolors -ge 8; then

termcols=$(tput cols)

bold="$(tput bold)"

underline="$(tput smul)"

standout="$(tput smso)"

normal="$(tput sgr0)" #reset to default font

black="$(tput setaf 0)"

red="$(tput setaf 1)"

green="$(tput setaf 2)"

yellow="$(tput setaf 3)"

blue="$(tput setaf 4)"

magenta="$(tput setaf 5)"

cyan="$(tput setaf 6)"

white="$(tput setaf 7)"

fi

fi

Usage is the same as with ANSI color control sequences: ${colorName}Text${normal}

print_bold() {

title="$1"

text="$2"

echo "${red}================================${normal}"

echo -e " ${bold}${yellow}${title}${normal}"

echo -en " ${text}"

echo "${red}================================${normal}"

}

References

- Bash colours ANSI/VT100 Control sequences

Run scripts from website

A script available over http/s can be executed on the fly with cURL or wget, without downloading it to the file system first. As usual, it should begin with a shebang.

curl -sL https://raw.githubusercontent.com/pio2pio/project/master/setup_sh | sudo -E bash -
wget -qO- https://raw.githubusercontent.com/pio2pio/project/master/setup_sh | sudo -E bash -

Run local scripts remotely

Use the -s option, which forces bash (or any POSIX-compatible shell) to read its commands from standard input rather than from a file named by the first positional argument. All arguments are then treated as parameters to the script. If you wish to pass option-style parameters, eg. --silent true, put -- before them so they are interpreted as arguments to test.sh instead of options to bash.

ssh user@remote-addr 'bash -s arg' < test.sh
ssh user@remote-addr bash -sx -- -p am -c /tmp/conf < ./support/scripts/sanitizer.sh
# -- signals the end of options and disables further option processing.
# any arguments after the -- are treated as filenames and arguments
# -p am -c /tmp/conf -these are arguments passed onto the sanitizer.sh script
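The argument handling can be tried locally before involving ssh. In this minimal sketch a here-document stands in for the local script file, and the one-line script body is made up for the demonstration.

```bash
# Local stand-in for: ssh user@remote-addr bash -s -- -p am -c /tmp/conf < script.sh
output=$(bash -s -- -p am -c /tmp/conf <<'EOF'
echo "script received $# arguments: $*"
EOF
)
echo "$output"
```

Everything after -- ends up in the script's positional parameters, so -p and -c are not parsed as options of bash itself.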

References

Scripts - solutions

Check connectivity

#!/bin/bash

red="\e[31m"; green="\e[32m"; blue="\e[34m"; light_yellow="\e[93m"; reset="\e[0m"

if [ "$1" = "onprem" ]; then

echo -e "${blue}Connectivity test to servers: on-prem${reset}"

declare -a hosts=(

wp.pl

rzeczpospolita.pl

)

else

echo -e "${blue}Connectivity test to Polish news servers: polish-news${reset}"

declare -a hosts=(

wp.pl

rzeczpospolita.pl

)

fi

#################################################

echo -e "From server: ${blue}$(hostname -f) $(hostname -I)${reset}"

for ((i = 0; i < ${#hosts[@]}; ++i)); do

RET=$(timeout 3 nc -z ${hosts[$i]} 22 2>&1) \

&& echo -e "[OK] $i ${hosts[$i]}" \

|| echo -e "${red}[ER] $i ${hosts[$i]}\t[ERROR: \

$(if [ $? -eq 124 ]; then echo "timeout maybe filtered traffic"; else \

if [ -z "$RET" ]; then echo "unknown"; else echo -e "$RET"; fi; fi)]${reset}"

sleep 0.3

done

# Working as well

#for host in "${hosts[@]}"; do

# RET=$(timeout 3 nc -z ${host} 22 2>&1) && echo -e "[OK] ${host}" || echo -e "${red}[ER] ${host}\t[ERROR: \

# $(if [ $? -eq 124 ]; then echo "timeout maybe filtered traffic"; else \

# if [ -z "$RET" ]; then echo "unknown"; else echo -e "$RET"; fi; fi)]${reset}"

# sleep 0.3

#done

LOCAL UBUNTU MIRROR

rsync -a --progress rsync://archive.ubuntu.com/ubuntu /opt/mirror/ubuntu - command to create local mirror.
ls /opt/mirror/ubuntu - shows all files

Partitions / Raid / LVM etc

Disk Commands

- df -h - shows disk space
- sudo fdisk -l - shows hard drive partitions
- ls -l /dev/sd* - shows all drives
- cat /proc/mdstat - shows Linux RAID drives in use
- pvdisplay - shows physical volumes
- lvdisplay - shows logical volumes

Provisioning Filesystems (Extra Storage)

Provision storage while the server is online.

- fdisk -l - reveals connected disks and partitions
- df -h - shows amount of disk space used
- /dev/sdb - unpartitioned
- mklabel - type msdos, if needed.
- sudo parted - partition tool
- select /dev/sdb - selects disk
- mkpart primary 1 10GB - 10GB partition
- print - shows disks in parted
- quit - to leave parted

- mke2fs - overlays a filesystem on the new partition.
- mke2fs -t ext4 -j /dev/sdb1 - creates file system.

or

- sudo mkfs.ext4 -j /dev/sdb1 - same as above.

- mount /dev/sdb1 /projectx/10GB - mounts the partition at the mount point
- mount - shows all system mounts.
- sudo blkid - shows partition UUID for fstab.
- sudo nano /etc/fstab - edits fstab.
- UUID="number" /projectx/10GB ext4 defaults - entry stored in fstab.

Provision SWAP storage on demand

Ability for the kernel to extend RAM via disk.

- free -m - shows the current state of memory and swap.
- top - also shows SWAP info.

- sudo fdisk -l - to identify partition space
- parted /dev/sdb - places in context of /dev/sdb
- print - to show partition table.
- mkpart primary linux-swap 10GB 12GB - starting from the 10GB first block moving up.
- set 2 swap on - turns partition 2 into swap.
- sudo fdisk -l /dev/sdb - confirms swap allocation.
- sudo mkswap /dev/sdb2 - overlays SWAP filesystem and displays UUID (for fstab)
- sudo blkid - shows all UUIDs
- sudo nano /etc/fstab - opens fstab for editing for SWAP reference using UUID.
- UUID=<uuid> none swap sw 0 0 - line for the fstab file.
- swapon -s - displays current swap situation
- sudo swapon -a - turns on swap storage.
- free -m || top || swapon -s - to confirm configuration.

Option SWAP creation which is file based

- dd if=/dev/zero of=/projectx/10GB/swapfile3GB bs=1M count=3072 - creates a 3GB dummy swap file (3072 blocks of 1MiB).
- sudo mkswap /projectx/10GB/swapfile3GB - overlays SWAP file system.
- sudo nano /etc/fstab - opens fstab for editing for SWAP reference using path /projectx/10GB/swapfile3GB
- sudo swapon -a - turns on all swap storage.

Storage Management LVM (Logical Volume Management)

Volume sets based on various disparate storage technologies.

Common configuration - raid hardware (redundancy) / LVM overlaying RAID config (aggregation)

Ability to extend, reduce, manipulate storage on demand.

LVM storage hierarchy:

Volume Group (Consists of 1 or more physical volumes)

- Logical Volume(s)

- File System(s)

6 steps to LVM setup

Appropriate 1 or more LVM partitions

- sudo parted /dev/sdb
- mkpart extended 13GB 20GB
- print
- mkpart logical lvm 13GB 20GB
- select /dev/sdc
- mklabel msdos
- mkpart primary 1GB 20GB
- set 1 lvm on
- print

20GB on SDC and 7GB on SDB

Partition

- sudo pvcreate /dev/sdb5 /dev/sdc1 - allocates LVM partitions as physical volumes
- sudo pvdisplay - shows LVM physical volumes.
- sudo vgcreate volgroup001 /dev/sdb5 /dev/sdc1 - aggregates volumes to volume group
- sudo lvcreate -L 200GB volgroup001 -n logvol001 - creates logical volumes
- sudo lvdisplay - shows logical volumes
- sudo mke2fs -t ext4 -j /dev/volgroup001/logvol001 - overlays EXT4 filesystem.
- Mount filesystem and commit changes to fstab

- sudo lvrename volgroup001 logvol001 volgroup002 - renames logical volume logvol001 within volgroup001 (use vgrename to rename a volume group). (Remember to edit fstab file or unmount)
- sudo lvresize -L 25GB /dev/volgroup001/logvolvar - Resizes logical volume to 25GB (up by 5GB from 20GB)
- sudo resize2fs /dev/mapper/volgroup001-logvolvar 25G - Resizes filesystem after increasing the volume size.
- sudo lvremove /dev/volgroup001/logvolvar - Remove volume completely. (Unmount first)
- sudo parted /dev/sdc - Add or assign more partitions to volume group LVM.
- print
- mkpart primary 20GB 25GB
- print
- set 2 lvm on
- print
- sudo pvcreate /dev/sdc2
- sudo pvdisplay
- sudo vgextend volgroup001 /dev/sdc2 - add new PV to volume group

Bash

Delete key gives ~ ? Add the following line to your $HOME/.inputrc (might not work if added to /etc/inputrc )

"\e[3~": delete-char