Difference between revisions of "Kubernetes/Security and RBAC"

Revision as of 00:44, 21 August 2019

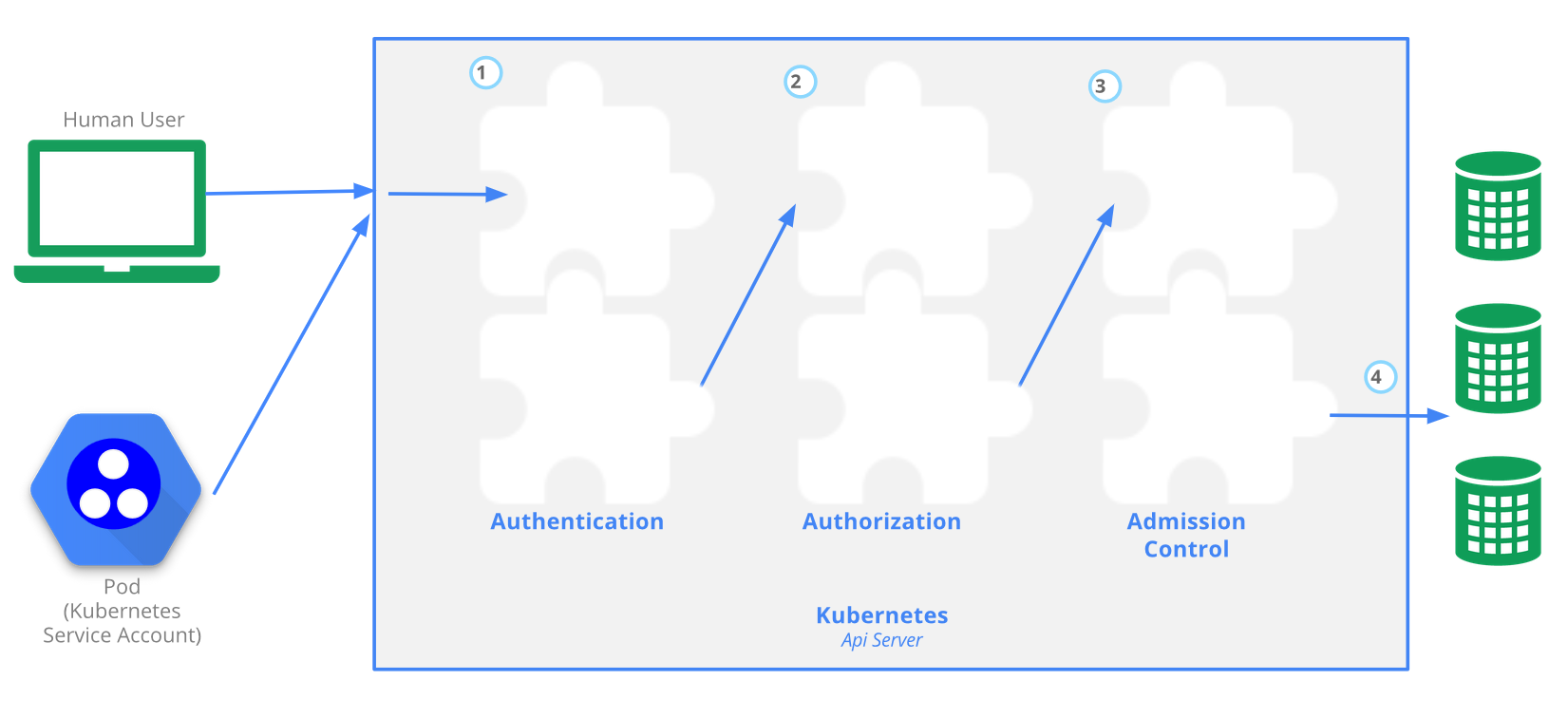

API Server and Role-Based Access Control

Once the API server has determined who you are (whether a pod or a user), the authorization is handled by RBAC.

To prevent unauthorized users from modifying the cluster state, RBAC is used by defining roles and role bindings for a user. A service account resource is created for a pod to determine what control it has over the cluster state. For example, the default service account will not allow you to list the services in a namespace.

The Kubernetes API server provides a CRUD (Create, Read, Update, Delete) interface for interacting with cluster state over a RESTful API. API calls typically come from two sources:
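One way to check what a service account is allowed to do, without opening a shell in a pod, is `kubectl auth can-i` combined with impersonation. The helper below is a minimal sketch: the function name `can_i_as_sa` is hypothetical, and it assumes your own user is permitted to impersonate service accounts.

```shell
# Hypothetical helper: ask the API server whether a service account
# may perform VERB on RESOURCE in NAMESPACE.
# Usage: can_i_as_sa VERB RESOURCE NAMESPACE SERVICEACCOUNT
can_i_as_sa() {
  kubectl auth can-i "$1" "$2" \
    --as="system:serviceaccount:$3:$4" \
    -n "$3"
}
```

For example, `can_i_as_sa list services rbac default` asks whether the default service account in the rbac namespace may list services; with no extra role bindings in place the answer would be expected to be `no`.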

- kubectl

- POD

Each request goes through a four-stage process:

- Authentication

- Authorization

- Admission

- Writing the resulting state change to the persistent store (the etcd database)

Example admission plugins:

- the serviceaccount plugin applies the default serviceaccount to pods that don't explicitly specify one

RBAC is managed by 4 resources, divided over 2 groups

| Group-1 namespace resources | Group-2 cluster level resources | resources type |

|---|---|---|

| roles | cluster roles | defines what can be done |

| role bindings | cluster role bindings | defines who can do it |

When a pod is deployed, the default serviceaccount is assigned if none is specified in the pod manifest. The serviceaccount represents the identity of an app running in a pod, and a token file holds its authentication token. Let's create a namespace and a test pod, then try to list the available services.

    kubectl create ns rbac
    kubectl run apitest --image=nginx -n rbac #create test container, to run API call test from

Each pod has a serviceaccount, and its API authentication token is mounted in the pod. When the pod makes an API call it presents this token, which lets it assume the serviceaccount and so gives it an identity. You can preview the token on the pod.

    kubectl -n rbac exec -it apitest-<UID> -- /bin/sh #connect to the container shell
    #display token and namespace that allows to connect to API server from this pod
    root$ cat /var/run/secrets/kubernetes.io/serviceaccount/{token,namespace}
    #call API server to list K8s services in 'rbac' namespace (assumes an API proxy listening on localhost:8001)
    root$ curl localhost:8001/api/v1/namespaces/rbac/services
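If no proxy is listening on localhost:8001 inside the pod, the API server can also be called directly with the mounted token. The following is a minimal sketch, assuming `curl` is available in the container and the API server is reachable at the in-cluster DNS name `kubernetes.default.svc`; the function name `sa_api_get` and the `SA_DIR` override are hypothetical.

```shell
# Directory where Kubernetes mounts the service account credentials.
SA_DIR="${SA_DIR:-/var/run/secrets/kubernetes.io/serviceaccount}"

# Hypothetical helper: GET an API path, authenticating as the pod's
# service account with the mounted bearer token and cluster CA.
# Usage: sa_api_get /api/v1/namespaces/rbac/services
sa_api_get() {
  token=$(cat "$SA_DIR/token")
  curl --silent \
    --cacert "$SA_DIR/ca.crt" \
    --header "Authorization: Bearer $token" \
    "https://kubernetes.default.svc$1"
}
```

With the default service account and no role binding, the response is expected to be a Forbidden status object rather than a list of services.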

List all serviceaccounts. A serviceaccount can only be used within its own namespace.

    kubectl get serviceaccounts -n rbac
    kubectl get secrets
    NAME                  TYPE                                  DATA   AGE
    default-token-qqzc7   kubernetes.io/service-account-token   3      39h
    kubectl get secrets default-token-qqzc7 -o yaml #display secrets

ServiceAccount

The API server first evaluates whether the request comes from a service account or a normal user. Normal users are assumed to be managed outside the cluster, for example through a private key, a user store or even a file with a list of user names and passwords. Kubernetes doesn't have objects that represent normal user accounts, and normal users cannot be added to the cluster through an API call.

    kubectl get serviceaccounts #or 'sa' in short
    kubectl create serviceaccount jenkins
    kubectl get serviceaccounts jenkins -o yaml
    apiVersion: v1
    kind: ServiceAccount
    metadata:
      creationTimestamp: "2019-08-05T07:10:40Z"
      name: jenkins
      namespace: default
      resourceVersion: "678"
      selfLink: /api/v1/namespaces/default/serviceaccounts/jenkins
      uid: 21cba4bb-b750-11e9-86b3-0800274143a9
    secrets:
    - name: jenkins-token-cspjm
    kubectl get secret [secret_name]

Assign ServiceAccount to a pod

    apiVersion: v1
    kind: Pod
    metadata:
      name: busybox
      namespace: default
    spec:
      serviceAccountName: jenkins #<-- ServiceAccount
      containers:
      - image: busybox:1.28.4
        command:
        - sleep
        - "3600"
        imagePullPolicy: IfNotPresent
        name: busybox
      restartPolicy: Always

#Verify

kubectl.exe get pods -o yaml | sls serviceAccount

{"apiVersion":"v1","kind":"Pod","metadata":{"annotations":{},"name":"busybox","namespace":"default"},"spec":{"containers":[{"command":["sleep","3600"],"image":"busybox:1.28.4","imagePullPolicy":"IfNotPresent","name":"busybox"}],"restartPolicy":"Always","serviceAccountName":"jenkins"}}

- mountPath: /var/run/secrets/kubernetes.io/serviceaccount

serviceAccount: jenkins

serviceAccountName: jenkins
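The `sls` filter above is PowerShell-specific. A shell-independent way to read the same field is a JSONPath query, sketched below; the function name `pod_service_account` is hypothetical.

```shell
# Hypothetical helper: print the service account a pod runs as.
# Usage: pod_service_account POD [NAMESPACE]
pod_service_account() {
  kubectl get pod "$1" -n "${2:-default}" \
    -o jsonpath='{.spec.serviceAccountName}'
}
```

For the pod defined above, `pod_service_account busybox` would be expected to print `jenkins`.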

Create Administrative account

This is a process of setting up a new remote administrator.

kubectl.exe config set-credentials piotr --username=piotr --password=password

#new section in ~/.kube/config has been added:

    users:
    - name: user1
    ...
    - name: piotr
      user:
        password: password
        username: piotr

#create clusterrolebinding; this grants cluster-admin to anonymous users and is not recommended

kubectl create clusterrolebinding cluster-system-anonymous --clusterrole=cluster-admin --user=system:anonymous

clusterrolebinding.rbac.authorization.k8s.io/cluster-system-anonymous created

#copy server ca.crt

laptop$ scp ubuntu@k8s-cluster.acme.com:/etc/kubernetes/pki/ca.crt .

#set kubeconfig

kubectl config set-cluster kubernetes --server=https://k8s-cluster.acme.com:6443 --certificate-authority=ca.crt --embed-certs=true

#Create context

kubectl config set-context kubernetes --cluster=kubernetes --user=piotr --namespace=default

#Switch the current context to the new one

kubectl config use-context kubernetes

Create a role (namespaced permissions)

The role describes what actions can be performed. This role allows listing services in the web namespace.

    apiVersion: rbac.authorization.k8s.io/v1
    kind: Role
    metadata:
      namespace: web #this needs to be created beforehand
      name: service-reader
    rules:
    - apiGroups: [""]
      verbs: ["get", "list"]
      resources: ["services"]

The role does not specify who can do it, so we create a roleBinding that binds it to a user, serviceAccount or group. A roleBinding can reference only a single role, but can bind it to multiple users, serviceAccounts or groups.

    kubectl create rolebinding roleBinding-test --role=service-reader --serviceaccount=web:default -n web
    # Verify access has been granted
    curl localhost:8001/api/v1/namespaces/web/services
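The same binding can be kept as a manifest so that it lives in version control next to the Role. The sketch below writes a RoleBinding intended to be equivalent to the `kubectl create rolebinding` command; the file name `rolebinding-test.yaml` is an assumption.

```shell
# Write a RoleBinding that grants the service-reader Role
# to the default service account in the web namespace.
cat > rolebinding-test.yaml << 'EOF'
apiVersion: rbac.authorization.k8s.io/v1
kind: RoleBinding
metadata:
  name: roleBinding-test
  namespace: web
subjects:
- kind: ServiceAccount
  name: default
  namespace: web
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: Role
  name: service-reader
EOF
```

It can then be applied with `kubectl apply -f rolebinding-test.yaml`.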

Create a clusterrole (cluster-wide permissions)

In this example we create a ClusterRole that can access the persistentvolumes API, then create a ClusterRoleBinding (pv-test) for the default ServiceAccount (name: default) in the 'web' namespace. The SA is the account that a pod uses by default when it is authenticated by the API server. When we then attach to the container and try to list a cluster-wide resource, persistentvolumes, this is allowed because of the ClusterRole bound to the pod's ServiceAccount.

    # Create a ClusterRole to access PersistentVolumes:
    kubectl create clusterrole pv-reader --verb=get,list --resource=persistentvolumes
    # Create a ClusterRoleBinding for the cluster role:
    kubectl create clusterrolebinding pv-test --clusterrole=pv-reader --serviceaccount=web:default
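For comparison, the two imperative commands correspond roughly to the manifests below. This is a sketch rather than the exact objects kubectl generates; the file name `pv-reader.yaml` is an assumption.

```shell
# Write a ClusterRole and a ClusterRoleBinding for the
# default service account in the web namespace.
cat > pv-reader.yaml << 'EOF'
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  name: pv-reader
rules:
- apiGroups: [""]
  resources: ["persistentvolumes"]
  verbs: ["get", "list"]
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: pv-test
subjects:
- kind: ServiceAccount
  name: default
  namespace: web
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: pv-reader
EOF
```

Neither object carries a namespace in its metadata because both are cluster-scoped; only the subject names the namespace of the service account.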

The YAML for a pod that includes a curl and proxy container:

    apiVersion: v1
    kind: Pod
    metadata:
      name: curlpod
      namespace: web
    spec:
      containers:
      - image: tutum/curl
        command: ["sleep", "9999999"]
        name: main
      - image: linuxacademycontent/kubectl-proxy
        name: proxy
      restartPolicy: Always

Create the pod that will allow you to curl directly from the container:

kubectl apply -f curlpod.yaml

kubectl get pods -n web # Get the pods in the web namespace

kubectl exec -it curlpod -n web -- sh # Open a shell to the container:

#Verify you can access PersistentVolumes (cluster-level) from the pod

    / # curl localhost:8001/api/v1/persistentvolumes
    {
      "kind": "PersistentVolumeList",
      "apiVersion": "v1",
      "metadata": {
        "selfLink": "/api/v1/persistentvolumes",
        "resourceVersion": "7173"
      },
      "items": []
    }

List all API resources

    PS C:> kubectl.exe proxy
    Starting to serve on 127.0.0.1:8001

Network policies

Network policies allow you to specify which pods can talk to other pods. For example, the Calico plugin allows securing communication by:

- applying network policy based on:
  - pod label-selector
  - namespace label-selector
  - CIDR block range

- securing communication (who can access pods) by setting up:
  - ingress rules
  - egress rules

POSTing any NetworkPolicy manifest to the API server will have no effect unless your chosen networking solution supports network policy. Network Policy is just an API resource that defines a set of rules for Pod access. However, to enable a network policy, we need a network plugin that supports it. We have a few options:

- Calico, Cilium, Kube-router, Romana, Weave Net

minikube

If you plan to use Minikube with its default settings, the NetworkPolicy resources will have no effect due to the absence of a network plugin and you’ll have to start it with --network-plugin=cni.

minikube start --network-plugin=cni --memory=4096

- Minikube local cluster with NetworkPolicy powered by Cilium

- network-policies on your laptop by banzaicloud

Install Calico network policies

What is Canal? It was a Tigera and CoreOS project to integrate Calico and flannel.

Install the Canal network policy plugin (Calico <= v3.5):

    wget -O canal.yaml https://docs.projectcalico.org/v3.5/getting-started/kubernetes/installation/hosted/canal/canal.yaml
    curl https://docs.projectcalico.org/v3.8/manifests/canal.yaml -O
    curl https://docs.projectcalico.org/v3.8/manifests/calico-policy-only.yaml -O
    # Update Pod IPs to '--cluster-cidr'; changing this value after installation has no effect
    ## Get Pod's cidr
    kubectl cluster-info dump | grep -m 1 service-cluster-ip-range
    kubectl cluster-info dump | grep -m 1 cluster-cidr
    ## Minikube cidr can change, so exec to a Pod is the best option; alternatively check the minikube config
    grep ServiceCIDR ~/.minikube/profiles/minikube/config.json
    ## Update config
    POD_CIDR="<your-pod-cidr>"
    sed -i -e "s?10.244.0.0/16?$POD_CIDR?g" canal.yaml
    kubectl apply -f canal.yaml

Cilium - networkPolicies

Cilium DaemonSet will place one Pod per node. Each Pod then will enforce network policies on the traffic using Berkeley Packet Filter (BPF).

    minikube start --network-plugin=cni --memory=4096 #--kubernetes-version=1.13
    kubectl create -f https://raw.githubusercontent.com/cilium/cilium/v1.5/examples/kubernetes/1.14/cilium-minikube.yaml

Create NetworkPolicy

Create a 'default' isolation policy for a namespace by creating a NetworkPolicy that selects all pods but does not allow any ingress traffic to those pods. We will run the example in the dev namespace.

Create a namespace

kubectl create ns dev

Default deny all ingress traffic

    cat > deny-all-ingress.yaml << EOF
    apiVersion: networking.k8s.io/v1
    kind: NetworkPolicy
    metadata:
      name: deny-all-ingress
      # namespace: dev
    spec:
      podSelector: {} # select all pods in a ns
      ingress:        # rules, if empty no traffic allowed
      policyTypes:
      - Ingress
    EOF

Default allow all ingress traffic

    cat > allow-all-ingress.yaml << EOF
    apiVersion: networking.k8s.io/v1
    kind: NetworkPolicy
    metadata:
      name: allow-all-ingress
      # namespace: dev
    spec:
      podSelector: {}
      ingress:        # rules to apply to selected pods
      - {}            # rule: all allowed
      policyTypes:
      - Ingress       # rule types that the NetworkPolicy relates to
    EOF

Create NetworkPolicy

$ kubectl.exe apply -f deny-all-ingress.yaml

networkpolicy.networking.k8s.io/deny-all-ingress created

$ kubectl get networkPolicy -A

NAMESPACE NAME POD-SELECTOR AGE

default deny-all-ingress <none> 6s

$ kubectl describe networkPolicy deny-all-ingress

Name: deny-all-ingress

Namespace: dev

Created on: 2019-08-20 22:31:30 +0100 BST

Labels: <none>

Annotations: kubectl.kubernetes.io/last-applied-configuration:

{"apiVersion":"networking.k8s.io/v1","kind":"NetworkPolicy","metadata":{"annotations":{},"name":"deny-all-ingress","namespace":"dev"},"spe...

Spec:

PodSelector: <none> (Allowing the specific traffic to all pods in this namespace)

Allowing ingress traffic:

<none> (Selected pods are isolated for ingress connectivity)

Allowing egress traffic:

<none> (Selected pods are isolated for egress connectivity)

Policy Types: Ingress

Run test pod

    # Single Pods in -n --namespace
    kubectl -n dev run --generator=run-pod/v1 busybox1 --image=busybox -- sleep 3600
    kubectl -n dev run --generator=run-pod/v1 busybox2 --image=busybox -- sleep 3600
    # default labels: --labels="run=busybox1"
    kubectl -n dev run --generator=run-pod/v1 busybox3 --image=busybox --labels="app=A" -- sleep 3600
    kubectl -n dev run --generator=run-pod/v1 busybox4 --image=busybox --labels="app=B" -- sleep 3600
    # Get pods with labels
    kubectl -n dev get pods -owide --show-labels
    NAMESPACE  NAME          READY  STATUS   RESTARTS  AGE  IP             NODE      NOMINATED NODE  READINESS GATES  LABELS
    dev        pod/busybox1  1/1    Running  0         34m  10.15.185.121  minikube  <none>          <none>           run=busybox1
    dev        pod/busybox2  1/1    Running  0         34m  10.15.171.50   minikube  <none>          <none>           run=busybox2
    dev        pod/busybox3  1/1    Running  0         31m  10.15.140.94   minikube  <none>          <none>           app=A
    dev        pod/busybox4  1/1    Running  0         18s  10.15.174.22   minikube  <none>          <none>           app=B
    dev        pod/nginx1    1/1    Running  0         27m  10.15.19.151   minikube  <none>          <none>           run=nginx1
    dev        pod/nginx2    1/1    Running  0         27m  10.15.104.113  minikube  <none>          <none>           run=nginx2
    # Ping should time out because the NetworkPolicy is in place
    kubectl -n dev exec -ti busybox1 -- ping -c3 10.15.171.50 #<busybox2-ip>
    PING 10.15.171.50 (10.15.171.50): 56 data bytes
    --- 10.15.171.50 ping statistics ---
    3 packets transmitted, 0 packets received, 100% packet loss

- Note

- If you wish to use DNS names, e.g. busybox2, you need to create a service first; without one the name cannot be resolved:

    kubectl -n dev exec -ti busybox1 -- nslookup busybox2
    Server:    10.96.0.10
    Address:   10.96.0.10:53
    ** server can't find busybox2.dev.svc.cluster.local: NXDOMAIN
    *** Can't find busybox2.svc.cluster.local: No answer
    *** Can't find busybox2.cluster.local: No answer
    *** Can't find busybox2.dev.svc.cluster.local: No answer
    *** Can't find busybox2.svc.cluster.local: No answer
    *** Can't find busybox2.cluster.local: No answer
    # Try nginx; the container has no ping or curl, only wget --spider <dns|ip>
    kubectl.exe -n dev run --generator=run-pod/v1 nginx1 --image=nginx
    kubectl.exe -n dev run --generator=run-pod/v1 nginx2 --image=nginx
    kubectl.exe -n dev expose pod nginx1 --port=80
    kubectl -n dev get services
    NAMESPACE  NAME            TYPE       CLUSTER-IP    EXTERNAL-IP  PORT(S)  AGE  SELECTOR    LABELS
    dev        service/nginx1  ClusterIP  10.97.69.143  <none>       80/TCP   22m  run=nginx1  run=nginx1
    # Call by dns, fqdn: nginx1.dev.svc.cluster.local
    kubectl.exe -n dev exec -ti busybox1 -- /bin/wget --spider http://nginx1
    Connecting to nginx1 (10.97.69.143:80)
    remote file exists

Create allow-A-to-B.yaml policy

    cat > allow-A-to-B.yaml << EOF
    apiVersion: networking.k8s.io/v1
    kind: NetworkPolicy
    metadata:
      name: allow-a-to-b #must be lowercase req.DNS-1123
      # namespace: dev
    spec:
      podSelector: {}
      ingress:
      - from:
        - podSelector:
            matchLabels:
              app: A
      egress:
      - to:
        - podSelector:
            matchLabels:
              app: B
      policyTypes:
      - Ingress
      - Egress
    EOF

kubectl -n dev create -f allow-A-to-B.yaml

networkpolicy.networking.k8s.io/allow-out-to-in created

Test allow policy

    kubectl -n dev exec -ti busybox1 -- ping -c3 10.15.171.50 # fail
    kubectl -n dev exec -ti busybox3 -- ping -c3 10.15.174.22 # success
    # Apply labels from: A to: B
    kubectl -n dev label pod busybox1 app=A
    kubectl -n dev label pod busybox2 app=B
    kubectl -n dev exec -ti busybox1 -- ping -c3 10.15.171.50 # success
    # Tidy up
    kubectl -n dev delete networkpolicy --all
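The allow-A-to-B policy uses `podSelector: {}`, so it applies to every pod in the namespace. A narrower variant, shown here only as a sketch, selects just the app=B pods and admits ingress from app=A pods; the policy name `allow-a-into-b` and the file name are hypothetical.

```shell
# Write a policy that isolates only app=B pods for ingress
# and allows connections from app=A pods in the same namespace.
cat > allow-a-into-b.yaml << 'EOF'
apiVersion: networking.k8s.io/v1
kind: NetworkPolicy
metadata:
  name: allow-a-into-b
spec:
  podSelector:
    matchLabels:
      app: B          # policy applies only to pods labelled app=B
  policyTypes:
  - Ingress
  ingress:
  - from:
    - podSelector:
        matchLabels:
          app: A      # only pods labelled app=A may connect
EOF
```

Applied with `kubectl -n dev create -f allow-a-into-b.yaml`, this leaves pods without the app=B label unaffected by the policy, whereas the `{}` selector isolates all of them.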

- Deployment (optional test)

kubectl create deployment nginx --image=nginx # create a deployment

kubectl scale deployment nginx --replicas=3 # scale deployment

#kubectl run nginx --image=nginx --replicas=3 #deprecated command

kubectl expose deployment nginx --port=80

# Try accessing a service from another pod

kubectl run --generator=run-pod/v1 busybox --image=busybox -- sleep 3600

kubectl exec busybox -it -- /bin/sh #this often crashes

/ # wget --spider --timeout=1 nginx #this should timeout

#--spider does not download just browses

kubectl exec -ti busybox -- wget --spider --timeout=1 nginx

Create a NetworkPolicy that allows ingress on port 80 to pods labelled 'app: web' from pods labelled 'app: busybox'

    apiVersion: networking.k8s.io/v1
    kind: NetworkPolicy
    metadata:
      name: web-netpolicy
    spec:
      podSelector:        # apply policy
        matchLabels:      # to these pods
          app: web
      ingress:
      - from:
        - podSelector:    # allow traffic
            matchLabels:  # from these pods
              app: busybox
        ports:
        - port: 80

Label a pod so that the NetworkPolicy selects it:

    kubectl label pods [pod_name] app=web
    kubectl run busybox --rm -it --image=busybox /bin/sh
    #wget --spider --timeout=1 nginx #this should timeout

namespace NetworkPolicy

    apiVersion: networking.k8s.io/v1
    kind: NetworkPolicy
    metadata:
      name: ns-netpolicy
    spec:
      podSelector:
        matchLabels:
          app: db
      ingress:
      - from:
        - namespaceSelector:
            matchLabels:
              tenant: web
        ports:
        - port: 5432

IP block NetworkPolicy

    apiVersion: networking.k8s.io/v1
    kind: NetworkPolicy
    metadata:
      name: ipblock-netpolicy
    spec:
      podSelector:
        matchLabels:
          app: db
      ingress:
      - from:
        - ipBlock:
            cidr: 192.168.1.0/24

egress NetworkPolicy

    apiVersion: networking.k8s.io/v1
    kind: NetworkPolicy
    metadata:
      name: egress-netpol
    spec:
      podSelector:
        matchLabels:
          app: web
      egress:
      - to:
        - podSelector:
            matchLabels:
              app: db
        ports:
        - port: 5432
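An egress policy that only allows traffic to the db pods also blocks DNS lookups from the selected pods, so connecting to a service by name may fail. A common workaround, sketched below, adds a second egress rule for port 53 to any destination; the policy name `egress-netpol-dns` and the file name are hypothetical.

```shell
# Write an egress policy for app=web pods that allows
# traffic to app=db on 5432 plus DNS (port 53, UDP and TCP).
cat > egress-netpol-dns.yaml << 'EOF'
apiVersion: networking.k8s.io/v1
kind: NetworkPolicy
metadata:
  name: egress-netpol-dns
spec:
  podSelector:
    matchLabels:
      app: web
  policyTypes:
  - Egress
  egress:
  - to:
    - podSelector:
        matchLabels:
          app: db
    ports:
    - port: 5432
  - ports:            # second rule: DNS to any destination
    - port: 53
      protocol: UDP
    - port: 53
      protocol: TCP
EOF
```

Leaving out the `to` field in the second rule means the port restriction applies to all destinations; a stricter setup could limit it to the cluster DNS pods.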