Linux Storage Management - Logical Volume Manager - LVM

Rescan the SCSI Bus to Add a SCSI Device Without rebooting the VM

While the VM is powered on, you can add disks through Add Hardware... in the virtual machine's Properties; the additional disks will be named virtual_disk-[1..2..3].vdk. In the example below I added two disks: 3 GB (/dev/sdb) and 4 GB (/dev/sdc).

Then force a rescan of the SCSI bus. Note that host# is the SCSI host (bus) number, so you may need to trigger the rescan on every host, host[0...2].

[root@vmcent7 ~]# echo "- - -" > /sys/class/scsi_host/host2/scan   #rescan disks
[root@vmcent7 ~]# fdisk -l
Disk /dev/sda: 15.0 GB, 15032385536 bytes, 29360128 sectors
<output omitted>
   Device Boot      Start         End      Blocks   Id  System
/dev/sda1   *        2048      616447      307200   83  Linux
/dev/sda2          616448    29360127    14371840   8e  Linux LVM
Disk /dev/mapper/centos-swap: 2097 MB, 2097152000 bytes, 4096000 sectors
<output omitted>
Disk /dev/mapper/centos-root: 7369 MB, 7369392128 bytes, 14393344 sectors
<output omitted>
Disk /dev/mapper/centos-home: 5242 MB, 5242880000 bytes, 10240000 sectors
<output omitted>
Disk /dev/sdb: 3221 MB, 3221225472 bytes, 6291456 sectors
<output omitted>
Disk /dev/sdc: 4294 MB, 4294967296 bytes, 8388608 sectors
<output omitted>
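When it is not obvious which host number the new disk is attached to, the rescan can be triggered on every host in a loop. The following is a minimal sketch, not a definitive tool: the function name rescan_scsi_hosts and its optional base-directory argument are my own (the argument exists only so the loop can be tried against a scratch directory). Writing to the real sysfs files requires root.

```shell
# Write the wildcard "- - -" (all channels, targets and LUNs) to the
# scan file of every SCSI host found under the base directory.
# base_dir is a hypothetical parameter; it defaults to the real sysfs path.
rescan_scsi_hosts() {
    base_dir="${1:-/sys/class/scsi_host}"
    for scan_file in "$base_dir"/host*/scan; do
        # Skip the unexpanded glob when no host directories exist.
        [ -e "$scan_file" ] || continue
        echo "- - -" > "$scan_file"
        echo "rescanned: $scan_file"
    done
}

# Usage (as root):
#   rescan_scsi_hosts
```

After the loop finishes, fdisk -l or lsblk should list the new disks, as in the output above.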

LVM - operation

    LV1         LV2         LV3           LV - logical volumes, sized as we choose
   /   \       /   \       /   \
[=============+=== VG - VOLUME GROUP =+=========+======]
                                          VG - volume group: the total space aggregated
                                          from the PVs (physical volumes);
                                          we can slice this as we wish
        /          |          \
      PV           PV          PV         PV - physical volume: partitions added to LVM
       |           |           |          management that can be grouped into volume groups
     sda1        sdb1        sdc1         partitions that span multiple disks

Preview LVM Disk Storage in Linux

List the Physical Volumes (PV), Volume Groups (VG) and Logical Volumes (LV) using the following commands:

# pvs
# vgs
# lvs

Addressing logical-volumes | mapper

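Addressing an LV through the mapper path or through the direct /dev/vol_vg/log_vol path is equivalent; both names refer to the same device. The mapper name is the VG name and the LV name joined by a hyphen.

/dev/vol_vg/log_vol
/dev/mapper/vol_vg-log_vol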
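The mapper name joins the VG and LV names with a single hyphen, and any hyphen inside either name is doubled; this is why the VG this-vg with the LV root shows up as this--vg-root in the lsblk output elsewhere on this page. A small sketch of that rule (the helper name mapper_path is made up for illustration):

```shell
# Build the /dev/mapper path for a VG/LV pair.
# Hyphens inside a name are doubled so the single separating
# hyphen between VG and LV stays unambiguous.
mapper_path() {
    vg="$(printf '%s' "$1" | sed 's/-/--/g')"
    lv="$(printf '%s' "$2" | sed 's/-/--/g')"
    printf '/dev/mapper/%s-%s\n' "$vg" "$lv"
}

mapper_path vg1 data        # prints /dev/mapper/vg1-data
mapper_path this-vg root    # prints /dev/mapper/this--vg-root
```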

Creating LVM Disk Storage

Current status of the LVM Volume Group, with two raw disks (sdb and sdc) installed but not yet included in any VG.

[piotr@vmcentos7 ~]$ sudo vgdisplay

--- Volume group ---

VG Name centos #A Volume Group name

System ID

Format lvm2 #LVM Architecture Used LVM2

Metadata Areas 1

Metadata Sequence No 4

VG Access read/write #Volume Group is in Read and Write and ready to use

VG Status resizable #Volume Group can be re-sized, expanded if needed

MAX LV 0

Cur LV 3 #Currently there are 3 Logical Volumes in this Volume Group

Open LV 3

Max PV 0

Cur PV 1 #Currently 1 physical disk is in use (sda)

Act PV 1 #and it is active, so we can use this volume group

VG Size 13.70 GiB

PE Size 4.00 MiB #Physical Extents. The size of a volume can be given in PEs or in GB; 4 MiB is the default PE size in LVM.

#E.g. to create a 5 GB logical volume we can request 1280 PEs

#Explanation: 1 GB = 1024 MB, so 5 GB = 1024 MB x 5 = 5120 MB; dividing by the 4 MB PE size gives 5120 / 4 = 1280 PEs

Total PE 3508 #Total number of PEs in this Volume Group

Alloc PE / Size 3507 / 13.70 GiB #PEs already allocated; nearly all are used, 3507 x 4 MiB = 14028 MiB

Free PE / Size 1 / 4.00 MiB #Only 1 PE of free space left

VG UUID k1tp1W-8l9s-7unm-vu9g-gSBi-8Mdn-eu0Fnc
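The extent arithmetic in the annotations above can be reproduced with shell integer arithmetic. This is only a sketch: the helper names extents_for_size and extents_to_mib are invented for illustration, and the first one assumes the requested size is a whole multiple of the PE size (integer division would otherwise round down).

```shell
# Number of physical extents needed for a volume of size_gib GiB.
# pe_mib is the PE size in MiB and defaults to LVM's 4 MiB.
extents_for_size() {
    size_gib="$1"
    pe_mib="${2:-4}"
    echo $(( size_gib * 1024 / pe_mib ))
}

# Space in MiB covered by a given number of extents.
extents_to_mib() {
    extents="$1"
    pe_mib="${2:-4}"
    echo $(( extents * pe_mib ))
}

extents_for_size 5       # prints 1280
extents_to_mib 3507      # prints 14028
```

The resulting extent count is what lvcreate accepts through its -l option, as an alternative to giving a size with -L.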

Create Logical Volumes

- BEFORE

[piotr@vmcentos7 ~]$ lsblk
NAME            MAJ:MIN RM  SIZE RO TYPE MOUNTPOINT
fd0               2:0    1    4K  0 disk
sda               8:0    0   14G  0 disk
├─sda1            8:1    0  300M  0 part /boot
└─sda2            8:2    0 13.7G  0 part
  ├─centos-swap 253:0    0    2G  0 lvm  [SWAP]
  ├─centos-root 253:1    0  6.9G  0 lvm  /
  └─centos-home 253:2    0  4.9G  0 lvm  /home
sdb               8:16   0    3G  0 disk
sdc               8:32   0    4G  0 disk
sr0              11:0    1  6.6G  0 rom

sudo pvs
PV         VG     Fmt  Attr PSize  PFree
/dev/sda2  centos lvm2 a--  13.70g 4.00m

- Create PVs by adding un-mounted disk or partition | Physical Volumes

PVs are created from sdb and sdc:

[piotr@vmcentos7 ~]$ sudo pvcreate /dev/sdb /dev/sdc
Physical volume "/dev/sdb" successfully created
Physical volume "/dev/sdc" successfully created

We can see that both disks have been added to the LVM manager, but they do not yet belong to any Volume Group.

[piotr@vmcentos7 ~]$ sudo pvs
PV         VG     Fmt  Attr PSize  PFree
/dev/sda2  centos lvm2 a--  13.70g 4.00m
/dev/sdb          lvm2 a--   3.00g 3.00g
/dev/sdc          lvm2 a--   4.00g 4.00g

- Create VGs | Volume Group

We now add both physical volumes to a new Volume Group.

[piotr@vmcentos7 ~]$ sudo vgcreate vg1 /dev/sdb /dev/sdc
Volume group "vg1" successfully created

[piotr@vmcentos7 ~]$ sudo pvs
PV         VG     Fmt  Attr PSize  PFree
/dev/sda2  centos lvm2 a--  13.70g 4.00m
/dev/sdb   vg1    lvm2 a--   3.00g 3.00g   #this PV now belongs to the vg1 Volume Group
/dev/sdc   vg1    lvm2 a--   4.00g 4.00g   #this PV now belongs to the vg1 Volume Group

[piotr@vmcentos7 ~]$ sudo vgs
VG     #PV #LV #SN Attr   VSize  VFree
centos   1   3   0 wz--n- 13.70g 4.00m
vg1      2   0   0 wz--n-  6.99g 6.99g   #newly created Volume Group vg1 with a combined available space of 6.99 GB

- Create LVs | Logical Volume(s)

We create a Logical Volume of size 5 GB named 'data' using space in Volume Group vg1. The available space in vg1 will decrease.

[piotr@vmcentos7 ~]$ sudo lvcreate --size 5G --name data vg1
Logical volume "data" created

[piotr@vmcentos7 ~]$ sudo lvs
LV   VG     Attr       LSize Pool Origin Data% Move Log Cpy%Sync Convert
home centos -wi-ao---- 4.88g
root centos -wi-ao---- 6.86g
swap centos -wi-ao---- 1.95g
data vg1    -wi-a----- 5.00g
[piotr@vmcentos7 ~]$ sudo vgs
VG     #PV #LV #SN Attr   VSize  VFree
centos   1   3   0 wz--n- 13.70g 4.00m
vg1      2   1   0 wz--n-  6.99g 1.99g   #VG available space decreased from 6.99 GB to 1.99 GB; 5 GB is used by the LV named 'data'
[piotr@vmcentos7 ~]$ sudo pvs
PV         VG     Fmt  Attr PSize  PFree
/dev/sda2  centos lvm2 a--  13.70g 4.00m
/dev/sdb   vg1    lvm2 a--   3.00g 1.99g
/dev/sdc   vg1    lvm2 a--   4.00g    0   #LV 'data' was allocated starting on sdc, consuming the whole disk (PFree=0), and then took part of sdb

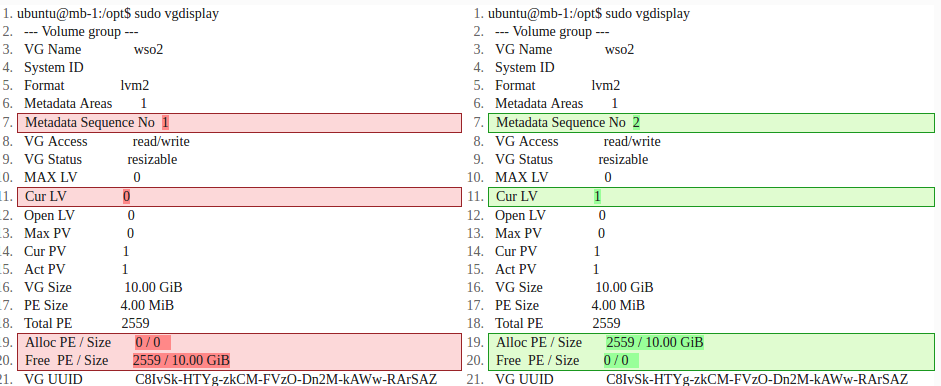

The image shows the differences after the first LV has been added to a Volume Group. It is not taken from the example above.

Create mounting filesystem | format and mount

sudo mkfs.ext4 /dev/mapper/vg1-data   # mapper path to the LV (alternative form of the next line, not an extra step)
sudo mkfs.ext4 /dev/vg1/data          # create (format) an ext4 filesystem on the 'data' logical volume
mkdir /mnt/data                       # create a mount point
mount /path/to/lv /path/to/dir        # general form
mount /dev/vg1/data /mnt/data

# Add mount information to /etc/fstab
UUID=UUID_NUMBER /mount/point fs_type defaults 0 0

[piotr@vmcentos7 ~]$ df -Th   # AFTER mounting
Filesystem              Type      Size  Used Avail Use% Mounted on
/dev/mapper/centos-root xfs       6.9G  1.2G  5.8G  17% /
devtmpfs                devtmpfs  909M     0  909M   0% /dev
tmpfs                   tmpfs     918M     0  918M   0% /dev/shm
tmpfs                   tmpfs     918M  8.6M  909M   1% /run
tmpfs                   tmpfs     918M     0  918M   0% /sys/fs/cgroup
/dev/sda1               ext2      291M  120M  153M  44% /boot
/dev/mapper/centos-home ext4      4.7G   20M  4.5G   1% /home
/dev/mapper/vg1-data    ext4      4.8G   20M  4.6G   1% /mnt/data   #LV data has been mounted

[piotr@vmcentos7 ~]$ lsblk   # AFTER
NAME            MAJ:MIN RM  SIZE RO TYPE MOUNTPOINT
fd0               2:0    1    4K  0 disk
sda               8:0    0   14G  0 disk
├─sda1            8:1    0  300M  0 part /boot
└─sda2            8:2    0 13.7G  0 part

Increase the size of a Linux LVM by adding a new disk

These steps describe extending an LV by adding an additional physical disk. All of them were verified on Ubuntu 16.04.

This is how the system looks before any changes, just after installing the /dev/sdb physical disk. List the block devices:

$ sudo lsblk
NAME                  MAJ:MIN RM   SIZE RO TYPE MOUNTPOINT
sda                     8:0    0 238.5G  0 disk
├─sda1                  8:1    0   487M  0 part /boot
├─sda2                  8:2    0     1K  0 part
└─sda5                  8:5    0   238G  0 part
  ├─this--vg-root     252:0    0   222G  0 lvm  /
  └─this--vg-swap_1   252:1    0  15.9G  0 lvm  [SWAP]
sdb                     8:16   0 465.8G  0 disk

Convert whole physical disk into PV volume

$ sudo pvcreate /dev/sdb
Physical volume "/dev/sdb" successfully created

Verify the PVs available on the system. We can see the new 465.76g disk has been added.

$ sudo pvs
PV         VG      Fmt  Attr PSize   PFree
/dev/sda5  this-vg lvm2 a--  238.00g  48.00m
/dev/sdb           lvm2 ---  465.76g 465.76g

Verify the LVs available on the system. The current size of the root LV (Logical Volume) is 222.04g.

$ sudo lvs
LV     VG      Attr       LSize   Pool Origin Data% Meta% Move Log Cpy%Sync Convert
root   this-vg -wi-ao---- 222.04g
swap_1 this-vg -wi-ao----  15.91g

Extend the VG (Volume Group) named "this-vg" by adding the whole new PV /dev/sdb. Remember that this does not extend the filesystem; think of it as an extra disk added to the pool of disks.

$ sudo vgextend this-vg /dev/sdb
Volume group "this-vg" successfully extended

Verify that the VG has been extended. Unnecessary lines have been removed from the output.

$ sudo vgdisplay
--- Volume group ---
VG Name               this-vg
Format                lvm2
VG Access             read/write
VG Status             resizable
Cur LV                2
Open LV               2
Cur PV                2
Act PV                2
VG Size               703.75 GiB   # sum of PVs: /dev/sdb (465.8G) + /dev/sda5 (238.00g)
PE Size               4.00 MiB
Total PE              180161
Alloc PE / Size       60915 / 237.95 GiB
Free PE / Size        119246 / 465.80 GiB

Extend the LV (Logical Volume) by adding the whole new PV to it. The filesystem is not extended yet; think of the new space as "unused space" not yet claimed by a filesystem.

$ sudo lvextend /dev/this-vg/root /dev/sdb
Size of logical volume this-vg/root changed from 222.04 GiB (56843 extents) to 687.80 GiB (176077 extents).
Logical volume root successfully resized.

# Example: add a specified size
$ sudo lvextend -L +500M /dev/vol_grp/log_vol

Extend the filesystem

# Offline variant, only for filesystems that can be unmounted (a mounted root filesystem cannot be)
$ sudo umount /dev/this-vg/root
# Run filesystem check
$ sudo e2fsck -f /dev/this-vg/root

# Resize the filesystem on the 'root' logical volume; here it is mounted on /, so resize2fs grows it online
$ sudo resize2fs /dev/this-vg/root
resize2fs 1.42.13 (17-May-2015)
Filesystem at /dev/this-vg/root is mounted on /; on-line resizing required
old_desc_blocks = 14, new_desc_blocks = 43
The filesystem on /dev/this-vg/root is now 180302848 (4k) blocks long.

Now we can see that /dev/mapper/this--vg-root filesystem is 677G in size.

piotr@linux:~$ df -h
Filesystem                 Size  Used Avail Use% Mounted on
udev                       7.8G     0  7.8G   0% /dev
tmpfs                      1.6G  9.5M  1.6G   1% /run
/dev/mapper/this--vg-root  677G  120G  528G  19% /
tmpfs                      7.8G   23M  7.8G   1% /dev/shm
tmpfs                      5.0M  4.0K  5.0M   1% /run/lock
tmpfs                      7.8G     0  7.8G   0% /sys/fs/cgroup
/dev/sda1                  472M  206M  242M  46% /boot
tmpfs                      1.6G   44K  1.6G   1% /run/user/1000

Troubleshooting

Check whether the LVM service starts at boot; if it does not, the LVs will not be recognised.

chkconfig -a boot.lvm       #SysV services
systemctl                   #systemd services, used in CentOS 7
systemctl list-unit-files

The LVs should be added permanently to /etc/fstab so that they are mounted at boot or by the mount -a command.
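A quick way to confirm that a mount point is actually listed in an fstab file is to look for it in the second field of the non-comment lines. The sketch below is only an illustration; fstab_has_mount is a made-up helper name, and it takes the file path as an argument so it can be pointed at a copy of the file.

```shell
# Succeed (exit status 0) when the given mount point appears as the
# second field of a non-comment line in the given fstab-style file.
fstab_has_mount() {
    fstab_file="$1"
    mount_point="$2"
    awk -v mp="$mount_point" '
        $1 !~ /^#/ && $2 == mp { found = 1 }
        END { exit !found }
    ' "$fstab_file"
}

# Usage:
#   fstab_has_mount /etc/fstab /mnt/data && echo "listed" || echo "missing"
```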

LVM - increase swap space in Linux

This section demonstrates how to increase swap space in a Linux VM without a reboot, by adding a SCSI disk and then using LVM to present it to the OS.

First, add a new SCSI disk using your favourite hypervisor management system (e.g. VMM FOCM).

Information gathering

Gather information about the current block devices: lsblk -f

Preview the current LVM configuration: pvs; vgs; lvs

Discover LVM physical volumes: pvscan

Partition new disk

Create a single partition spanning the whole disk

fdisk /dev/sdd #n - new partition, p - primary,

#1 - first partition, defaults to use all disk, w - write to partition table

Re-discover LVM volumes

pvscan

Create PV Physical Volume

The following command initializes /dev/sdd1 for use as an LVM physical volume

pvcreate /dev/sdd1

Add new partition to existing VG volume group

vgextend vg00 /dev/sdd1

Create logical volume

lvcreate -L 5G -n swap2.vol vg00                    #creates a 5 GB logical volume in the vg00 volume group
lvcreate -L 5G -n swap2.vol vg00 /dev/sdd1          #creates the logical volume using a specific PV, /dev/sdd1
lvcreate -l 100%FREE -n swap2.vol vg00 /dev/sdd1    #creates the logical volume using 100% of the free space of a specific PV

Format the disk partition as swap

mkswap /dev/mapper/vg00-swap2.vol
swapon -s

Add to swappable pool space

swapon /dev/mapper/vg00-swap2.vol

List swappable pool space to verify

swapon -s

Add disk to be auto-mounted

Back up fstab

cp /etc/fstab /etc/fstab.bak

Enable the swap automatically during boot by adding one of the lines below

vi /etc/fstab
UUID=###################### swap swap defaults 0 0
or
/dev/mapper/vg00-swap2.vol swap swap defaults 0 0

Reboot; it can take about 5 minutes if SELinux needs audit resources.

Partitions / Raid / LVM etc

Disk Commands

df -h - shows disk space
sudo fdisk -l - shows hard drive partitions
ls -l /dev/sd* - shows all drives
cat /proc/mdstat - shows Linux RAID drives in use
pvdisplay - shows physical volumes
lvdisplay - shows logical volumes

Provisioning Filesystems (Extra Storage)

Provision storage while the server is online.

fdisk -l - reveals connected disks and partitions
df -h - shows the amount of disk space used
/dev/sdb - unpartitioned
sudo parted - partition tool
select /dev/sdb - selects the disk
mklabel msdos - creates an MS-DOS partition table, if needed
mkpart primary 1 10GB - creates a 10GB partition
print - shows the partition table in parted
quit - leaves parted

mke2fs - overlays a filesystem on the new partition
mke2fs -t ext4 -j /dev/sdb1 - creates the filesystem

or

sudo mkfs.ext4 -j /dev/sdb1 - same as above

mount /dev/sdb1 /projectx/10GB - mounts the partition on the mount point
mount - shows all system mounts
sudo blkid - shows the partition UUID for fstab
sudo nano /etc/fstab - edits fstab
UUID="number" /projectx/10GB ext4 defaults - the line to store in fstab

Provision SWAP storage on demand

Ability for kernel to extend RAM via disk.

free -m - shows the current state of memory and swap
top - also shows swap info

sudo fdisk -l - identifies available partition space
parted /dev/sdb - opens parted in the context of /dev/sdb
print - shows the partition table
mkpart primary linux-swap 10GB 12GB - creates the partition, starting at the 10GB mark and ending at 12GB
set 2 swap on - flags partition 2 as swap
sudo fdisk -l /dev/sdb - confirms the swap allocation
sudo mkswap /dev/sdb2 - overlays the swap format and displays the UUID (for fstab)
sudo blkid - shows all UUIDs
sudo nano /etc/fstab - opens fstab for editing, to reference the swap by UUID
UUID="" none swap sw 0 0 - the line to add in nano
swapon -s - displays the current swap situation
sudo swapon -a - turns on swap storage
free -m || top || swapon -s - confirms the configuration

Extend Swap in Ubuntu

Tested in Ubuntu Desktop 20.04, live.

Note: Consider an alternative location for the swap file in /var/cache/swap/swapfile with permissions 0600.

# Verify current swap size. This shows a sum of all swaps types available for the OS.

free -h

total used free shared buff/cache available

Mem: 15Gi 11Gi 750Mi 1.3Gi 3.2Gi 2.4Gi

Swap: 2.0Gi 1.6Gi 376Mi

# Show all swap spaces files and partitions

swapon -s

Filename Type Size Used Priority

/swapfile file 2097148 1704924 -2

# In this example it is a file based swap

ls -l /swapfile

-rw------- 1 root root 2147483648 Jul 18 2020 /swapfile

# Disable swap before manipulating

sudo swapoff -a # switch off using swapfile

# Provision bigger swap 'dummy file'

sudo dd if=/dev/zero of=/swapfile bs=1M count=8192

8192+0 records in

8192+0 records out

8589934592 bytes (8.6 GB, 8.0 GiB) copied, 26.5438 s, 324 MB/s

# Turn the file into swap filesystem

sudo mkswap /swapfile

Setting up swapspace version 1, size = 8 GiB (8589930496 bytes)

no label, UUID=a491769b-e088-4a21-8a88-94e001f9f54c

# Enable swap space

sudo swapon /swapfile # or use -a flag to enable all swap spaces eg. if multiple files are in use

# List the swap size

sudo swapon /swapfile -s

Filename Type Size Used Priority

/swapfile file 8388604 58624 -2

# Optional: adjust fstab

sudo vi /etc/fstab

/swapfile none swap sw 0 0
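The note at the top of this section recommends 0600 permissions for the swap file. One way to make sure the file never exists with looser permissions is to create it under a restrictive umask. This is a sketch under my own assumptions: prepare_swapfile is a hypothetical helper, the block size is given in bytes rather than as 1M, and the function only allocates the file; mkswap and swapon still have to be run afterwards as in the steps above.

```shell
# Allocate a zero-filled file of size_mib MiB that is readable and
# writable by its owner only. Does not run mkswap or swapon.
prepare_swapfile() {
    swap_path="$1"
    size_mib="$2"
    # The subshell keeps the restrictive umask from leaking to the caller.
    ( umask 077 && dd if=/dev/zero of="$swap_path" bs=1048576 count="$size_mib" 2>/dev/null )
    # An already existing file keeps its old mode, so set it explicitly too.
    chmod 600 "$swap_path"
}

# Usage (as root, after swapoff):
#   prepare_swapfile /swapfile 8192
```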

Storage Management LVM (Logical Volume Management)

Volume sets based on various disparate storage technologies.

Common configuration - raid hardware (redundancy) / LVM overlaying RAID config (aggregation)

Ability to extend, reduce, manipulate storage on demand.

LVM storage hierarchy:

Volume Group (Consists of 1 or more physical volumes)

- Logical Volume(s)

- File System(s)

6 steps to LVM setup

Appropriate 1 or more LVM partitions

sudo parted /dev/sdb
(parted) mkpart extended 13GB 20GB
(parted) print
(parted) mkpart logical lvm 13GB 20GB
(parted) select /dev/sdc
(parted) mklabel msdos
(parted) mkpart primary 1GB 20GB
(parted) set 1 lvm on
(parted) print

Result: 20GB on sdc and 7GB on sdb

Partition

sudo pvcreate /dev/sdb5 /dev/sdc1 - allocates the LVM partitions as physical volumes
sudo pvdisplay - shows LVM physical volumes
sudo vgcreate volgroup001 /dev/sdb5 /dev/sdc1 - aggregates the physical volumes into a volume group
sudo lvcreate -L 200GB volgroup001 -n logvol001 - creates a logical volume
sudo lvdisplay - shows logical volumes
sudo mke2fs -t ext4 -j /dev/volgroup001/logvol001 - overlays an ext4 filesystem
Mount the filesystem and commit the changes to fstab

sudo lvrename volgroup001 logvol001 volgroup002 - renames logical volume logvol001 to volgroup002 within volgroup001; use vgrename to rename a volume group. (Remember to edit the fstab file or unmount first)
sudo lvresize -L 25GB /dev/volgroup001/logvolvar - resizes the logical volume from 20GB to 25GB (grows it by 5GB)
sudo resize2fs /dev/mapper/volgroup001-logvolvar 25G - resizes the filesystem after increasing the logical volume
sudo lvremove /dev/volgroup001/logvolvar - removes the volume completely (unmount first)
sudo parted /dev/sdc - adds or assigns more partitions to the LVM volume group
print
mkpart primary 20GB 25GB
print
set 2 lvm on
print
sudo pvcreate /dev/sdc2
sudo pvdisplay
sudo vgextend volgroup001 /dev/sdc2 - adds the new PV to the volume group

Shrink LVM volume

ubuntu@ubuntu:~$ sudo -i

root@ubuntu:~# lsblk -f

NAME FSTYPE LABEL UUID FSAVAIL FSUSE% MOUNTPOINT

loop0 squashfs 0 100% /rofs

loop1 squashfs 0 100% /snap/core18/2128

loop2 squashfs 0 100% /snap/gnome-3-34-1804/72

loop3 squashfs 0 100% /snap/gtk-common-themes/1515

loop4 squashfs 0 100% /snap/snapd/12704

loop5 squashfs 0 100% /snap/snap-store/547

sda

└─sda1 vfat UBUNTU 20_0 70A4-0BA4 4.6G 38% /cdrom

nvme0n1

├─nvme0n1p1 vfat 37A4-7AB6

└─nvme0n1p2 LVM2_member E6W8Ty-ZE50-Y4g1-2h4m-hQT9-Cz2j-JZojI9

├─vgubuntu-root

│ ext4 1904342e-d7a4-4921-b8f6-a2baab75b743

└─vgubuntu-swap_1

swap 123e6bb3-c345-44a9-817e-4d47c1db3684

root@ubuntu:~# pvs

PV VG Fmt Attr PSize PFree

/dev/nvme0n1p2 vgubuntu lvm2 a-- 237.97g 24.00m

root@ubuntu:~# vgs

VG #PV #LV #SN Attr VSize VFree

vgubuntu 1 2 0 wz--n- 237.97g 24.00m

root@ubuntu:~# lvs

LV VG Attr LSize Pool Origin Data% Meta% Move Log Cpy%Sync Convert

root vgubuntu -wi-a----- <237.00g

swap_1 vgubuntu -wi-a----- 976.00m

e2fsck -fy /dev/vgubuntu/root # /dev/vg/lv

e2fsck 1.45.5 (07-Jan-2020)

Pass 1: Checking inodes, blocks, and sizes

Pass 2: Checking directory structure

Pass 3: Checking directory connectivity

Pass 4: Checking reference counts

Pass 5: Checking group summary information

/dev/vgubuntu/root: 300174/15532032 files (3.1% non-contiguous), 6677301/62127104 blocks

resize2fs /dev/vgubuntu/root 220G

resize2fs 1.45.5 (07-Jan-2020)

Resizing the filesystem on /dev/vgubuntu/root to 57671680 (4k) blocks.

The filesystem on /dev/vgubuntu/root is now 57671680 (4k) blocks long.

root@ubuntu:~# lvreduce -L -15G /dev/vgubuntu/root

WARNING: Reducing active logical volume to <222.00 GiB.

THIS MAY DESTROY YOUR DATA (filesystem etc.)

Do you really want to reduce vgubuntu/root? [y/n]: y

Size of logical volume vgubuntu/root changed from <237.00 GiB (60671 extents) to <222.00 GiB (56831 extents).

Logical volume vgubuntu/root successfully resized.

# Resize the filesystem to full size of the lv volume

root@ubuntu:~# resize2fs /dev/vgubuntu/root

resize2fs 1.45.5 (07-Jan-2020)

Resizing the filesystem on /dev/vgubuntu/root to 58194944 (4k) blocks.

The filesystem on /dev/vgubuntu/root is now 58194944 (4k) blocks long.

# Resize swap partition

root@ubuntu:~# pvs

PV VG Fmt Attr PSize PFree

/dev/nvme0n1p2 vgubuntu lvm2 a-- 237.97g 15.02g

root@ubuntu:~# vgs

VG #PV #LV #SN Attr VSize VFree

vgubuntu 1 2 0 wz--n- 237.97g 15.02g

root@ubuntu:~# lvs

LV VG Attr LSize Pool Origin Data% Meta% Move Log Cpy%Sync Convert

root vgubuntu -wi-a----- <222.00g

swap_1 vgubuntu -wi-a----- 976.00m

root@ubuntu:~# lvextend -L +15G /dev/vgubuntu/swap_1

Size of logical volume vgubuntu/swap_1 changed from 976.00 MiB (244 extents) to 15.95 GiB (4084 extents).

Logical volume vgubuntu/swap_1 successfully resized.

root@ubuntu:~# pvs;vgs;lvs

PV VG Fmt Attr PSize PFree

/dev/nvme0n1p2 vgubuntu lvm2 a-- 237.97g 24.00m

VG #PV #LV #SN Attr VSize VFree

vgubuntu 1 2 0 wz--n- 237.97g 24.00m

LV VG Attr LSize Pool Origin Data% Meta% Move Log Cpy%Sync Convert

root vgubuntu -wi-a----- <222.00g

swap_1 vgubuntu -wi-a----- 15.95g

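Reboot, or mount the root LV, to verify. Note that the extended swap LV only provides the extra swap space once the swap area has been re-created on it with mkswap.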
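The order of operations in the transcript matters: the filesystem is shrunk first (to 220G), and only then is the LV reduced, so the LV never becomes smaller than the filesystem it holds. That condition can be checked from the numbers printed by resize2fs and lvreduce before answering "y". The helper below is a sketch with a made-up name (shrink_is_safe), and it assumes sizes are given as a filesystem block count, a block size in bytes, an extent count and a PE size in MiB.

```shell
# Succeed when a filesystem of fs_blocks blocks (fs_block_size bytes each)
# fits inside an LV of lv_extents extents (pe_mib MiB each, default 4).
shrink_is_safe() {
    fs_blocks="$1"
    fs_block_size="$2"
    lv_extents="$3"
    pe_mib="${4:-4}"
    fs_bytes=$(( fs_blocks * fs_block_size ))
    lv_bytes=$(( lv_extents * pe_mib * 1024 * 1024 ))
    [ "$fs_bytes" -le "$lv_bytes" ]
}

# Values from the transcript: filesystem already shrunk to 57671680 4k blocks,
# LV about to be reduced to 56831 extents.
if shrink_is_safe 57671680 4096 56831; then echo "safe"; else echo "unsafe"; fi
# prints "safe"

# The original, unshrunk filesystem (62127104 blocks) would not have fit.
if shrink_is_safe 62127104 4096 56831; then echo "safe"; else echo "unsafe"; fi
# prints "unsafe"
```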